
EU AI Act 2026: The New AI Advantage Is the Control Stack

Feb 20, 2026 · 6 min read

Europe is not just regulating AI. It is rewarding teams that can prove traceability, transparency, oversight, and monitoring in the product itself.

Europe is already in implementation mode

Most teams still talk about the EU AI Act as if it begins in 2026. That is already outdated.

As of 9 March 2026, Europe is not waiting for AI governance to start. Some of it is already live.

Since 2 February 2025, the rules on prohibited practices, the AI system definition, and the AI literacy obligations have applied. On 2 August 2025, the GPAI obligations and the governance structures followed.

That matters because it changes the real question for product teams. The question is no longer:

"Should we start thinking about trust and control later?"

It is:

"Can we show that this system is understandable, controllable, and monitorable now?"

This is not legal advice. It is a product and engineering reading of what the market is starting to reward.

Why 2 August 2026 still matters

There is too much loose talk online about delays. The official Commission timeline is still clear on one thing: 2 August 2026 remains the major application milestone.

That date still matters because it is tied to:

- the broader wave of AI Act application,

- Article 50 transparency obligations,

- the application of Annex III high-risk rules on the official timeline,

- and the start of more visible enforcement and support infrastructure.

The freshest signal is not theoretical. It is happening right now.

On 5 March 2026, the Commission published the second draft of the Code of Practice on marking and labelling AI-generated content. Feedback is open until 30 March 2026, and the final version is expected by early June 2026.

That is why I would not treat transparency as a side topic. If your product includes assistants, synthetic content, AI-generated text, or manipulated media, transparency is becoming product behavior, not just policy language.

The mistake most teams are still making

Many teams hear "regulation" and immediately think "legal memo". Serious teams should hear "system design requirements".

The market question is shifting from:

- "Which model do you use?"

to:

- "How do you control the system when it matters?"

That difference is not abstract. I have felt it in products like Fotelik, where trust depends on provenance, explainability, and knowing when the system should stay quiet instead of sounding clever.

Even outside formal high-risk classification, the engineering pressure is similar:

- you need a source trail,

- you need honest fallback behavior,

- you need a visible boundary between grounded and uncertain output,

- and you need an operating model for when quality drops.

The law is catching up to a product reality that already existed.

If you want a name for the engineering response, I still call it Fortress AI. Not because fear sells. Because controlled systems win.

What is uncertain, and what is not

There is one area where you should stay precise.

On 19 November 2025, the Commission adopted a simplification proposal as part of the Digital Omnibus package. In that proposal, the application of some high-risk rules could shift to later long-stop dates if the proposal is adopted in that form:

- 2 December 2027 for Annex III systems,

- 2 August 2028 for certain Annex I systems under harmonisation legislation.

But the important phrase is: if adopted.

As of 9 March 2026, this is still a proposal under discussion by the Parliament and Council. So the safe reading is:

- some timetable uncertainty exists for parts of high-risk AI,

- but the AI Act did not disappear,

- and the 2026 milestone did not suddenly stop mattering.

Stable facts are still stable:

- AI literacy is already live,

- GPAI obligations are already live,

- Article 50 still points to 2 August 2026,

- and the implementation layer is still actively moving.

If you build your roadmap around the assumption that "everything got delayed anyway," you are building on politics, not on the current operational reality.
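
One way to keep that distinction honest in planning is to store it as data. The sketch below is a hypothetical roadmap config, not an official source: the `Milestone` type, the status names, and `planningView` are illustrative names of my own, and the dates are only the ones quoted in this article.

```typescript
// "current_law" = on the official timeline today; "proposed" = only in the Digital Omnibus proposal.
type MilestoneStatus = "current_law" | "proposed";

type Milestone = { date: string; scope: string; status: MilestoneStatus };

// Dates are ISO strings (YYYY-MM-DD), so plain string comparison orders them correctly.
const milestones: Milestone[] = [
  { date: "2025-02-02", scope: "Prohibited practices, AI system definition, AI literacy", status: "current_law" },
  { date: "2025-08-02", scope: "GPAI obligations and governance structures", status: "current_law" },
  { date: "2026-08-02", scope: "Article 50 transparency obligations", status: "current_law" },
  { date: "2026-08-02", scope: "Annex III high-risk rules (official timeline)", status: "current_law" },
  { date: "2027-12-02", scope: "Annex III high-risk rules (proposed long-stop date)", status: "proposed" },
  { date: "2028-08-02", scope: "Certain Annex I systems (proposed long-stop date)", status: "proposed" },
];

type PlanningView = { live: Milestone[]; upcoming: Milestone[]; proposedOnly: Milestone[] };

// Split milestones into what already applies, what is scheduled, and what is only proposed.
function planningView(all: Milestone[], today: string): PlanningView {
  return {
    live: all.filter((m) => m.status === "current_law" && m.date <= today),
    upcoming: all.filter((m) => m.status === "current_law" && m.date > today),
    proposedOnly: all.filter((m) => m.status === "proposed"),
  };
}
```

Calling `planningView(milestones, "2026-03-09")` keeps the proposed long-stop dates in their own bucket, so a roadmap cannot quietly treat them as settled.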

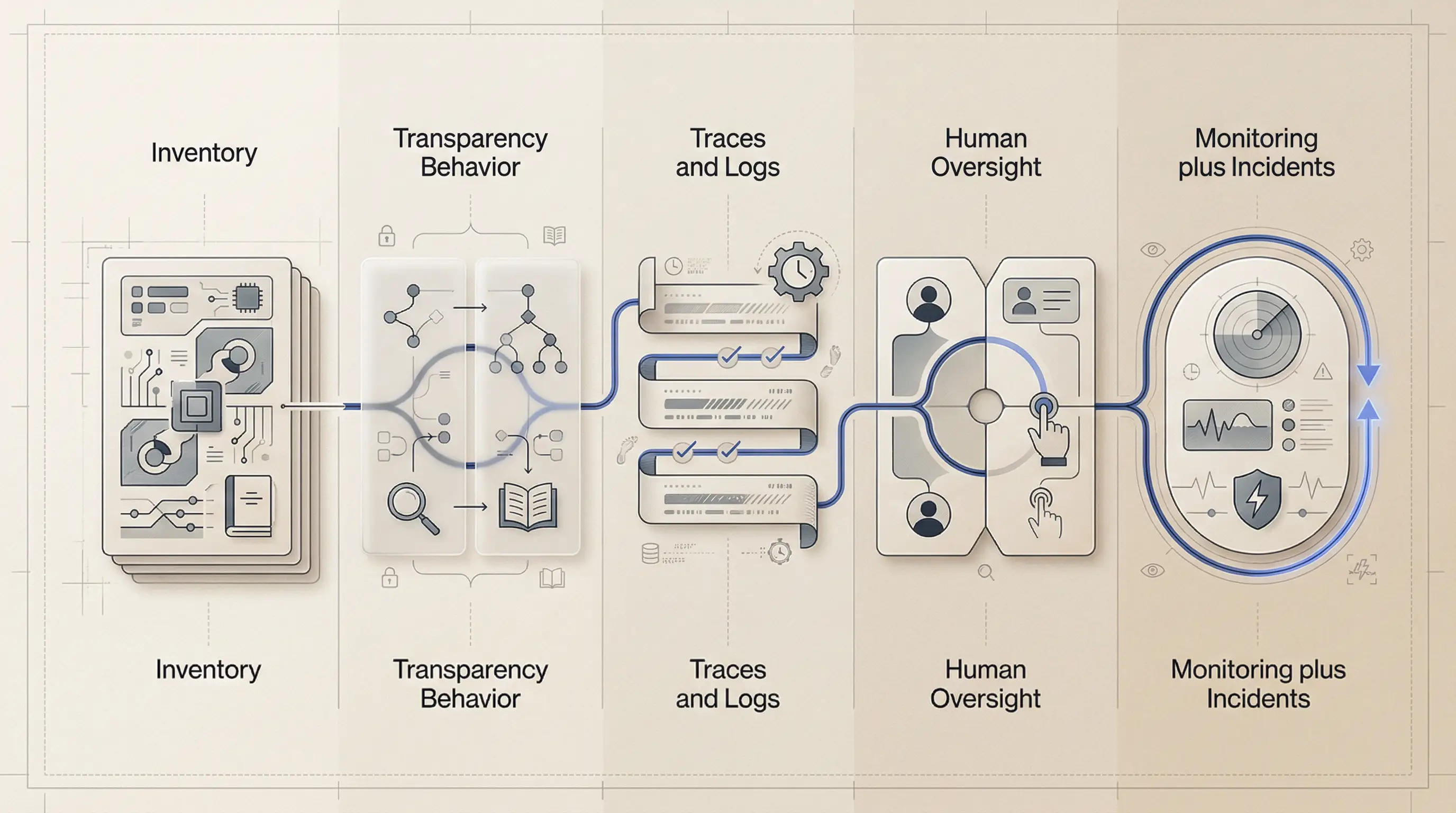

The control stack serious teams need

The strongest teams in Europe will not win because they mention compliance more often. They will win because they can show a concrete control stack around their AI features.

For me, that stack has five layers.

1. Inventory before architecture

First, know where AI actually exists in your product and operations.

That means keeping an inventory of:

- features and internal workflows using AI,

- data entering and leaving the system,

- model and provider choices,

- user impact level,

- and the owner responsible for the feature.

If you cannot enumerate your AI surface, you cannot classify risk, define controls, or explain decisions later.
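
A minimal sketch of what such an inventory could look like in code, assuming you keep it next to the product rather than in a spreadsheet. The field names, the `ImpactLevel` scale, and `findInventoryGaps` are hypothetical choices for illustration, not terms from the AI Act.

```typescript
// Team's own rough estimate of user impact; not a legal risk classification.
type ImpactLevel = "low" | "medium" | "high";

type AiInventoryEntry = {
  feature: string;   // feature or internal workflow using AI
  dataIn: string[];  // categories of data entering the system
  dataOut: string[]; // categories of data leaving the system
  provider: string;  // model provider
  model: string;     // model identifier
  impact: ImpactLevel;
  owner?: string;    // person or team responsible for the feature
};

// Return human-readable gaps: entries without an owner or without documented data flows.
function findInventoryGaps(entries: AiInventoryEntry[]): string[] {
  const gaps: string[] = [];
  for (const e of entries) {
    if (!e.owner || e.owner.trim() === "") gaps.push(`${e.feature}: no owner assigned`);
    if (e.dataIn.length === 0) gaps.push(`${e.feature}: input data not documented`);
    if (e.dataOut.length === 0) gaps.push(`${e.feature}: output data not documented`);
  }
  return gaps;
}

// Placeholder entries; provider and model names are made up.
const inventory: AiInventoryEntry[] = [
  {
    feature: "support-assistant",
    dataIn: ["user question"],
    dataOut: ["generated answer"],
    provider: "example-provider",
    model: "example-model",
    impact: "medium",
    owner: "support-platform",
  },
  {
    feature: "invoice-summary",
    dataIn: ["invoice text"],
    dataOut: [],
    provider: "example-provider",
    model: "example-model",
    impact: "high",
  },
];

const inventoryGaps = findInventoryGaps(inventory);
```

Here `inventoryGaps` flags `invoice-summary` twice: once for the missing owner and once for the undocumented output data. A check like this can run in CI so the inventory does not drift from the product.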

2. Transparency as product behavior

Article 50 is a good forcing function because it turns transparency into something visible.

This is not just about writing documentation. It is about what the user actually sees:

- when they are interacting with AI,

- when content is synthetic or manipulated,

- what part of the output is grounded,

- and what the system could not verify.

In practice, that means labels, disclosures, source surfaces, refusal states, and plain-language uncertainty. A hidden disclaimer in a help center is not a product strategy.
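
To make that concrete, here is a small sketch of a response shape that carries its own disclosure data to the UI. Everything in it is an assumption for illustration: the type names, the `buildDisclosure` function, and especially the label wording, which is placeholder copy and not text prescribed by Article 50.

```typescript
// One piece of an answer plus the sources that back it. No sources means not grounded.
type AnswerSegment = { text: string; sources: string[] };

type AssistantAnswer = {
  segments: AnswerSegment[];
  syntheticMedia: boolean; // true when the answer includes generated or manipulated media
};

type Disclosure = {
  aiNotice: string;              // always shown: the user is talking to an AI system
  syntheticLabel: string | null; // shown only for synthetic or manipulated content
  grounded: AnswerSegment[];     // segments the UI can render with source links
  unverified: AnswerSegment[];   // segments the UI should mark as unverified
};

// Derive what the interface must show from the answer itself.
function buildDisclosure(answer: AssistantAnswer): Disclosure {
  return {
    aiNotice: "You are interacting with an AI system.",
    syntheticLabel: answer.syntheticMedia ? "This content is AI-generated or manipulated." : null,
    grounded: answer.segments.filter((s) => s.sources.length > 0),
    unverified: answer.segments.filter((s) => s.sources.length === 0),
  };
}
```

The design choice is that the boundary between grounded and unverified output is computed from data, so the frontend cannot forget to show it.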

3. Logs and traces you can actually inspect

For high-risk AI, automatically generated logs are an explicit obligation under the Act's record-keeping rules (Article 12). But even below that threshold, traces are the difference between control and folklore.

When something goes wrong, you need to answer basic questions fast:

- which model ran,

- which prompt version was used,

- what sources were retrieved,

- what tools were called,

- what the output looked like,

- and how the system resolved the interaction.

Even a thin trace schema changes the quality of your operations:

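```typescript
export type AiTrace = {
  traceId: string;             // unique id for one AI interaction
  timestamp: string;           // when the interaction happened
  feature: string;             // product feature that triggered the call
  model: string;               // model that ran
  promptVersion: string;       // version of the prompt that was used
  retrievedSources?: string[]; // sources retrieved for the answer, if any
  latencyMs: number;           // latency in milliseconds
  outcome: "success" | "low_confidence" | "fallback" | "refused" | "error"; // how the interaction resolved
};
```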

If you cannot inspect the answer path, you do not really control the system.
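
A schema only helps if every call fills it in. One possible pattern, sketched here under my own assumptions, is a thin wrapper that runs a generation step and always emits a trace, even when the step throws. `runTraced`, `StepResult`, `TraceContext`, and the `sink` callback are hypothetical names, and the step is synchronous only to keep the example short.

```typescript
type Outcome = "success" | "low_confidence" | "fallback" | "refused" | "error";

// Same shape as the AiTrace schema above, repeated so the sketch is self-contained.
type AiTrace = {
  traceId: string;
  timestamp: string;
  feature: string;
  model: string;
  promptVersion: string;
  retrievedSources?: string[];
  latencyMs: number;
  outcome: Outcome;
};

// Hypothetical helper types: what the wrapped step returns and what the caller knows up front.
type StepResult = { text: string; outcome: Outcome; retrievedSources?: string[] };
type TraceContext = { feature: string; model: string; promptVersion: string };

// Process-local counter, used only to keep trace ids unique in this sketch.
let traceCounter = 0;

function runTraced(
  ctx: TraceContext,
  step: () => StepResult,
  sink: (trace: AiTrace) => void
): StepResult {
  const startedAt = Date.now();
  let result: StepResult;
  try {
    result = step();
  } catch {
    // A step that throws is still traced, with outcome "error" and empty text.
    result = { text: "", outcome: "error" };
  }
  traceCounter += 1;
  const trace: AiTrace = {
    traceId: `${ctx.feature}-${startedAt}-${traceCounter}`,
    timestamp: new Date(startedAt).toISOString(),
    feature: ctx.feature,
    model: ctx.model,
    promptVersion: ctx.promptVersion,
    latencyMs: Date.now() - startedAt,
    outcome: result.outcome,
  };
  if (result.retrievedSources) trace.retrievedSources = result.retrievedSources;
  sink(trace); // the sink decides where traces go: a log line, a queue, a database
  return result;
}
```

The wrapper converts exceptions into an `"error"` result instead of rethrowing. That is a deliberate simplification; a real system may prefer to record the trace and then rethrow.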

4. Human oversight has to exist in the UI

One of the most useful lenses in the AI Act is simple: people should be able to understand limits, notice anomalies, avoid over-reliance, and intervene.

That is not a PDF. That is interface and workflow design.

Real oversight usually looks like:

- review before release in sensitive flows,

- override and correction,

- escalation to a human owner,

- feature-flag or shutdown capability,

- and an audit log of human actions.

This is one reason I think product engineers and strong frontend engineers are underrated in the AI market. They know how to build high-stakes surfaces that remain understandable under pressure.
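
The backend half of that workflow can be small. The sketch below is one hypothetical shape for it, assuming an in-memory store and a single feature: `OversightGate` and its method names are illustrative, not a reference design. Sensitive drafts wait for a human, corrections are recorded as overrides, and a disable switch stops the feature.

```typescript
type HumanAction = {
  at: string; // ISO timestamp of the human action
  actor: string;
  action: "approve" | "override" | "disable_feature";
  draftId: string | null;
  note: string | null;
};

type Draft = { id: string; text: string; sensitive: boolean };

type ReleaseDecision =
  | { status: "released"; text: string }
  | { status: "needs_review"; draftId: string }
  | { status: "feature_disabled" };

class OversightGate {
  readonly auditLog: HumanAction[] = [];
  private enabled = true;
  private pending: { [id: string]: Draft } = {};

  // AI output enters here. Sensitive drafts are held for a human instead of being released.
  submit(draft: Draft): ReleaseDecision {
    if (!this.enabled) return { status: "feature_disabled" };
    if (draft.sensitive) {
      this.pending[draft.id] = draft;
      return { status: "needs_review", draftId: draft.id };
    }
    return { status: "released", text: draft.text };
  }

  // A human approves the draft as-is or replaces the text; both paths are logged.
  review(actor: string, draftId: string, correctedText?: string): ReleaseDecision {
    const draft = this.pending[draftId];
    if (!draft) throw new Error(`No pending draft with id ${draftId}`);
    delete this.pending[draftId];
    const finalText = correctedText !== undefined ? correctedText : draft.text;
    this.record(actor, finalText !== draft.text ? "override" : "approve", draftId, null);
    return { status: "released", text: finalText };
  }

  // Shutdown capability: after this call, submit() releases nothing.
  disable(actor: string, note: string): void {
    this.enabled = false;
    this.record(actor, "disable_feature", null, note);
  }

  private record(
    actor: string,
    action: HumanAction["action"],
    draftId: string | null,
    note: string | null
  ): void {
    this.auditLog.push({ at: new Date().toISOString(), actor, action, draftId, note });
  }
}
```

The interface work is then about making `needs_review` and `feature_disabled` visible states rather than silent failures.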

5. Monitoring and incident paths after launch

The AI Act's post-market monitoring and serious incident logic matters because it forces the right operating habit: launch is not the end of the quality story.

You need a system for watching:

- drift in output quality,

- rising fallback or refusal rates,

- source freshness problems,

- harmful failure patterns,

- and incidents that need formal escalation.

This is why I do not separate compliance from reliability. At the product level, they start to merge.
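
Part of that watch list can be computed directly from trace outcomes. Here is a minimal sketch, assuming traces carry the `outcome` field from the schema earlier and that you choose your own limits. `checkWindow`, `RateLimits`, and the threshold values in the usage note are hypothetical. Output-quality drift and source freshness need their own signals and are not covered here.

```typescript
type Outcome = "success" | "low_confidence" | "fallback" | "refused" | "error";

// Only the trace fields this check needs.
type TraceSummary = { feature: string; outcome: Outcome };

// Maximum tolerated share of each outcome in a window (0 to 1). Values are team-defined.
type RateLimits = { fallback: number; refused: number; error: number };

type Alert = { feature: string; outcome: Outcome; rate: number; limit: number };

// Share of traces with the given outcome; an empty window counts as rate 0.
function outcomeRate(traces: TraceSummary[], outcome: Outcome): number {
  if (traces.length === 0) return 0;
  return traces.filter((t) => t.outcome === outcome).length / traces.length;
}

// Compare one feature's recent traces against the limits and return any breaches.
function checkWindow(feature: string, recent: TraceSummary[], limits: RateLimits): Alert[] {
  const own = recent.filter((t) => t.feature === feature);
  const watched: Array<"fallback" | "refused" | "error"> = ["fallback", "refused", "error"];
  const alerts: Alert[] = [];
  for (const outcome of watched) {
    const rate = outcomeRate(own, outcome);
    if (rate > limits[outcome]) {
      alerts.push({ feature, outcome, rate, limit: limits[outcome] });
    }
  }
  return alerts;
}
```

For example, with limits of `{ fallback: 0.2, refused: 0.2, error: 0.05 }`, a window where 3 of 10 answers fell back produces a single fallback alert. What happens next, a page, a ticket, or a formal escalation, belongs to your incident path.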

What buyers will increasingly ask to see

Enterprise buyers are unlikely to ask only:

"Are you compliant?"

More often, they will ask for evidence in a more practical form:

- Show me where AI is used in the product.

- Show me how users are informed.

- Show me one trace of a meaningful answer or decision.

- Show me who can review, override, or stop the system.

- Show me how you monitor quality after launch.

The teams that can answer those questions with artifacts will look stronger than teams with more impressive demos and weaker controls.

That is the shift I care about. Europe is not just creating more policy work. It is creating a market advantage for teams that can turn trust into system behavior.

The real opportunity

The strongest AI products in Europe will not be the ones that feel the most magical in a demo. They will be the ones buyers, operators, and users can believe when the stakes rise.

That is why I think the new advantage is the control stack:

- inventory,

- transparency behavior,

- traces,

- oversight,

- and monitoring.

Not because regulation is exciting. Because trust compounds.

That is the difference between shipping an AI feature and shipping an AI system a serious buyer can sign off on.

Key takeaways

- Europe is in implementation mode: AI literacy and GPAI obligations are already live.

- 2 August 2026 still matters because Article 50 transparency and the broader application wave are still on the official timeline.

- Possible high-risk timetable shifts are still part of a Commission proposal, not settled law.

- The teams that win will show a control stack: inventory, transparency behavior, traces, oversight, and monitoring.

References

- EU AI Act (Regulation (EU) 2024/1689) — EUR-Lex

- European Commission — Regulatory framework on AI

- European Commission — AI Act implementation timeline

- AI Act Service Desk — Article 4 (AI literacy)

- AI Act Service Desk — Article 50 (transparency obligations)

- European Commission — Second draft code on marking and labelling AI-generated content

- European Commission — AI Act standardisation (Digital Omnibus context)

- European Commission — Navigating the AI Act FAQ
