Frontend Did Not Die. It Became My Edge in Product AI

Feb 10, 2026 · 7 min read

I did not move into AI because frontend stopped mattering. I moved because AI products reward the habits demos usually ignore: trust UX, evals, latency, and shipping discipline.

AI did not make my frontend years irrelevant

I did not move into AI because frontend stopped mattering. I moved because product work moved one layer deeper.

For more than a decade I built frontend and full-stack systems. That work taught me how users break flows, how state leaks, how latency changes behavior, and how trust disappears after one bad interaction.

Those are still the problems I care about. The difference is that AI features make them harder.

By 2025 I had what many engineers call a stable path: senior scope, strong compensation, remote work, and a life that looked settled from the outside. But I had already learned earlier in my career how fast "stable" can change. That made me less interested in protecting a label and more interested in understanding where engineering value was moving.

That is how I ended up in product AI. Not as a total reinvention. As a sharper expression of the same instinct: build systems people can actually trust.

The market signal is repricing, not hype

I needed a boring signal, not a slogan. The useful question was never "Is AI big?" The useful question was "Where is the premium moving?"

On January 16, 2026, LinkedIn reported that AI Engineer remained the number one role on its U.S. Jobs on the Rise list, and that roles requiring AI literacy had grown 70% year over year in the United States. On January 22, 2026, Indeed Hiring Lab reported that nearly 6% of U.S. firms had at least one AI-related job posting in November 2025, and that among firms hiring for AI, more than two in five postings mentioned AI-related skills.

That does not mean everyone needs to become a researcher. It means AI capability is getting priced into more product, engineering, and operating work.

The broader signal points in the same direction. The World Economic Forum's Future of Jobs Report 2025 put AI and big data at the top of the fastest-growing skill groups. The Stanford 2025 AI Index said 78% of organizations were using AI in 2024, up from 55% a year earlier.

Once I read the market that way, the career question changed. It was no longer "Should I leave frontend?" It became "Which parts of my background compound in AI products, and which parts need new layers?"

The wrong reaction is either fear or cosplay

I keep seeing two bad reactions.

The first is fear: "Frontend is dead. I need a total reinvention."

The second is cosplay: "If I learn enough prompt tricks, I can call myself an AI engineer."

Both reactions miss the real shift.

Most companies do not need another person who can produce a flashy demo. They need people who can turn uncertain model behavior into a product surface that is understandable, measurable, and safe enough to ship.

That work sits at the boundary of:

- product judgment,

- system design,

- operational control,

- and user trust.

That boundary is exactly where strong frontend and product engineers can be dangerous in a good way.

Product AI, not demos

This is the line I keep coming back to: Product AI, not demos.

A demo mostly has to prove one thing: "Look, the model can do it."

A product has to prove four harder things:

- the feature solves a real user job,

- the user can tell when to trust it,

- the team can inspect what happened when it goes wrong,

- and the economics still make sense after launch.

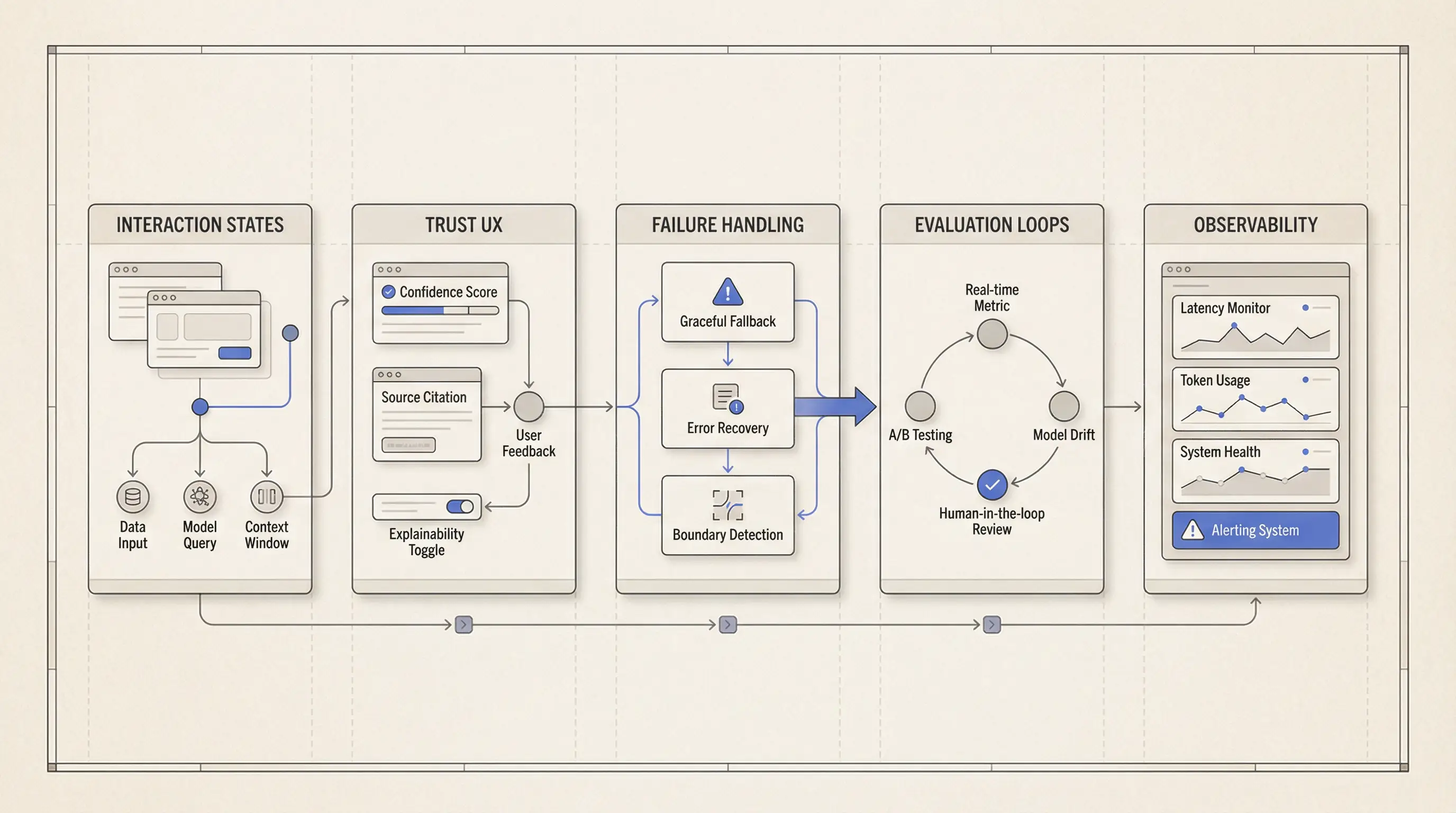

That is why I care about:

- evaluation loops, not only prompts,

- fallback and correction UX, not only happy paths,

- latency and cost budgets, not only model quality,

- source grounding and explainability, not only fluent output,

- release discipline, not only feature velocity.

If you cannot explain the failure modes, you do not own the feature. If you cannot inspect regressions, you do not control the system. If the UI hides uncertainty, users will punish you faster than any benchmark will.

What frontend actually gave me

Frontend did not prepare me to train foundation models. That was never the bet.

It prepared me for the parts of AI product work that break first in reality.

1. Uncertainty as a UX problem

Frontend work trains you to think in partial states: loading, empty, stale, invalid, blocked, retrying, degraded.

AI adds new states, but the habit transfers: low confidence, conflicting sources, tool failure, stale context, partial grounding.

That is why I care so much about trust UX. A useful AI feature does not only answer. It tells the user what it knows, what it could not verify, and what they should do next.
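A minimal sketch of how those states can be made explicit in TypeScript. The state names, the `AnswerState` and `TrustNotice` types, and the `trustNotice` function are all hypothetical, chosen for illustration rather than taken from any real product or library. The idea is that every answer state maps to what the system knows and what the user should do next.

```typescript
// Hypothetical answer states for an AI feature. Names are illustrative only.
type Source = { title: string; url: string };

type AnswerState =
  | { kind: "grounded"; text: string; sources: Source[] }
  | { kind: "partial_grounding"; text: string; sources: Source[]; unverified: string[] }
  | { kind: "conflicting_sources"; text: string; sources: Source[] }
  | { kind: "stale_context"; text: string; asOf: string }
  | { kind: "tool_failure"; tool: string };

type TrustNotice = {
  tone: "ok" | "caution" | "error";
  message: string; // what the system knows or could not verify
  nextStep: string; // what the user should do next
};

function trustNotice(state: AnswerState): TrustNotice {
  switch (state.kind) {
    case "grounded":
      return {
        tone: "ok",
        message: `Based on ${state.sources.length} source(s).`,
        nextStep: "Open the sources to check details.",
      };
    case "partial_grounding":
      return {
        tone: "caution",
        message: `Could not verify: ${state.unverified.join(", ")}.`,
        nextStep: "Treat the unverified parts as a draft.",
      };
    case "conflicting_sources":
      return {
        tone: "caution",
        message: "The sources disagree on this answer.",
        nextStep: "Compare the sources before acting.",
      };
    case "stale_context":
      return {
        tone: "caution",
        message: `This answer uses data as of ${state.asOf}.`,
        nextStep: "Refresh the context and ask again.",
      };
    case "tool_failure":
      return {
        tone: "error",
        message: `The ${state.tool} tool failed, so no answer was produced.`,
        nextStep: "Retry, or continue without the assistant.",
      };
    default: {
      // Compile-time exhaustiveness check: adding a new state without
      // handling it here becomes a type error.
      const unhandled: never = state;
      throw new Error(`Unhandled answer state: ${JSON.stringify(unhandled)}`);
    }
  }
}
```

Modeling the states as a discriminated union means the UI cannot quietly render a low-trust answer as if it were a grounded one.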

2. Shipping discipline under change

At Common, a lot of the real work was making a complex production frontend safer to change. That meant architecture boundaries, migration safety nets, contract tests, Playwright coverage, and removing dead branches instead of stacking more complexity on top.

AI systems need the same instinct. Prompts change. Models change. Source documents change. Tools change. Without guardrails, every "small improvement" can create a silent regression.
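One way to catch that kind of silent regression is a release gate that compares eval results for a candidate change against a baseline. The sketch below is an assumption about how such a gate could look, not a description of any specific tool: `EvalResult`, `releaseGate`, and the default `maxDrop` of 0.02 are hypothetical.

```typescript
// Hypothetical eval result shape: one entry per test case in a fixed eval set.
type EvalResult = { caseId: string; passed: boolean };

type GateDecision = {
  ok: boolean;
  passRateDelta: number; // candidate pass rate minus baseline pass rate
  newlyFailing: string[]; // cases that passed on baseline but fail on candidate
};

function passRate(results: EvalResult[]): number {
  if (results.length === 0) return 0;
  return results.filter((r) => r.passed).length / results.length;
}

// Blocks a release if the aggregate pass rate drops by more than maxDrop,
// or if any case that used to pass now fails.
function releaseGate(
  baseline: EvalResult[],
  candidate: EvalResult[],
  maxDrop = 0.02, // illustrative threshold, not a recommendation
): GateDecision {
  const baselinePassed = new Set(
    baseline.filter((r) => r.passed).map((r) => r.caseId),
  );
  const newlyFailing = candidate
    .filter((r) => !r.passed && baselinePassed.has(r.caseId))
    .map((r) => r.caseId);
  const passRateDelta = passRate(candidate) - passRate(baseline);
  const ok = passRateDelta >= -maxDrop && newlyFailing.length === 0;
  return { ok, passRateDelta, newlyFailing };
}
```

Tracking newly failing cases separately matters because a prompt change can fix one case and break another, leaving the aggregate pass rate unchanged while behavior users relied on has regressed.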

3. Performance and interaction judgment

Frontend engineers learn fast that latency is product design. A few hundred milliseconds can change behavior. A blocking flow gets abandoned. A misleading loading state teaches the wrong expectation.

AI products make this more expensive. Now latency, token cost, and fallback design are tightly connected. You cannot separate product quality from runtime behavior.
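To make that connection concrete, here is a small sketch that treats latency and cost as explicit budgets and picks a fallback when they are exceeded. Everything in it is hypothetical: the `Plan` and `Budget` types, the `pickPlan` function, and the idea of ordering plans from highest to lowest expected quality are assumptions for illustration.

```typescript
// A hypothetical way to serve a request: a model call with expected
// latency, token usage, and per-1k-token prices.
type Plan = {
  name: string;
  expectedLatencyMs: number;
  inputTokens: number;
  outputTokens: number;
  usdPer1kInput: number;
  usdPer1kOutput: number;
};

type Budget = { maxLatencyMs: number; maxCostUsd: number };

function estimateCostUsd(plan: Plan): number {
  return (
    (plan.inputTokens / 1000) * plan.usdPer1kInput +
    (plan.outputTokens / 1000) * plan.usdPer1kOutput
  );
}

// Plans are assumed to be ordered from highest to lowest expected quality.
// Returns the first plan that fits both budgets, or null when none fits,
// in which case the caller should show a non-AI fallback.
function pickPlan(plans: Plan[], budget: Budget): Plan | null {
  for (const plan of plans) {
    const fitsLatency = plan.expectedLatencyMs <= budget.maxLatencyMs;
    const fitsCost = estimateCostUsd(plan) <= budget.maxCostUsd;
    if (fitsLatency && fitsCost) return plan;
  }
  return null;
}
```

The point of the sketch is that the fallback path is a product decision made up front, not an error handler added after the first timeout in production.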

4. Human-readable systems

Good frontend work is not only pixels. It is legibility. The user should understand where they are, what happened, and what they can do next.

In AI, the same rule applies to answers, corrections, provenance, permissions, and refusal states. That is why I do not treat explanation as nice polish. In many AI features, explanation is part of the product contract.

The proof is in real systems, not in side quests

The shift became real for me because of the systems I worked on, not because of content about AI.

Common: safe change in a live system

Common is strong proof that I know how to work inside real complexity. The value there is not "AI". It is architecture judgment, migration safety nets, and shippable refactor work inside a live product.

That matters more in AI than many people admit. When the behavior layer is probabilistic, the rest of the product needs to become more legible, not less.

Waku: paid AI is a product system

Waku is the clearest professional proof that AI product work is not just model calls. The hard parts were monetization, entitlement logic, prompt orchestration, the streaming experience, and the delivery workflows around expensive generation.

That taught me a simple lesson: once users pay for AI behavior, trust and control stop being optional.

Fotelik: explainability in a sensitive domain

Fotelik is my strongest independent AI product proof.

I built it around structured extraction, scoring, retrieval, and explainable results in a politically sensitive space. That forced better habits:

- grounding instead of black-box answers,

- schema constraints instead of loose output,

- human-in-the-loop operations instead of blind automation,

- and semantic retrieval that had to serve a user-facing result, not an internal toy demo.

That project made the boundary clear. The hard part was never "can the model say something smart?" The hard part was "can the system produce something a user should trust?"
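As an illustration of "schema constraints instead of loose output", here is a minimal sketch of validating a model's JSON output before anything reaches the user. It is not Fotelik's actual code: the `ExtractedClaim` shape, its field names, and the `parseClaim` function are hypothetical, and a real project might use a schema library instead of hand-written checks.

```typescript
// Hypothetical shape for one extracted item. Field names are illustrative.
type ExtractedClaim = { statement: string; score: number; sourceUrl: string };

type ParseResult =
  | { ok: true; value: ExtractedClaim }
  | { ok: false; errors: string[] };

// Parses raw model output and rejects anything that does not match the schema,
// collecting every violation so it can be logged or shown to a reviewer.
function parseClaim(raw: string): ParseResult {
  let data: unknown;
  try {
    data = JSON.parse(raw);
  } catch {
    return { ok: false, errors: ["output is not valid JSON"] };
  }
  if (typeof data !== "object" || data === null || Array.isArray(data)) {
    return { ok: false, errors: ["expected a JSON object"] };
  }

  const { statement, score, sourceUrl } = data as Record<string, unknown>;
  const errors: string[] = [];

  if (typeof statement !== "string" || statement.trim() === "") {
    errors.push("statement must be a non-empty string");
  }
  if (typeof score !== "number" || !Number.isFinite(score) || score < 0 || score > 1) {
    errors.push("score must be a number between 0 and 1");
  }
  if (typeof sourceUrl !== "string" || !/^https?:\/\//.test(sourceUrl)) {
    errors.push("sourceUrl must be an http(s) URL");
  }

  if (errors.length > 0) return { ok: false, errors };
  return {
    ok: true,
    value: {
      statement: statement as string,
      score: score as number,
      sourceUrl: sourceUrl as string,
    },
  };
}
```

A rejected output can then be routed to a retry or to a human reviewer instead of being rendered as if it were a trustworthy result.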

What I am not betting on

I am not betting that every company needs a giant agent system. I am not betting that prompt engineering becomes its own durable moat. I am not betting that one more generic AI demo will impress serious buyers.

I am betting on a narrower thing:

The premium will go to engineers who can combine:

- product thinking,

- AI system behavior,

- evaluation and observability,

- and user trust under real constraints.

That is also why I do not frame this as a total career reinvention. It is a deliberate consolidation of skills I already had: product sense, frontend rigor, full-stack delivery, and a bias toward operable systems.

I added new layers. I did not abandon the old ones.

What this means if you hire me for AI work

If someone brings me in for AI work, the job is not "make the model talk."

The job is usually some version of this:

- define where AI adds real product value,

- make uncertainty usable in the interface,

- design the retrieval, prompting, or tool boundaries cleanly,

- add evals, traces, and release gates,

- and keep latency, cost, safety, and governance explicit.

That is the work I want more of. It is also the kind of work I want this portfolio to prove.

The bet

I did not bet on AI because frontend stopped mattering. I bet on AI because the next premium is not on interface polish alone. It is on engineers who can connect interface judgment, system control, and model behavior into one product.

Frontend was not the wrong foundation. For the kind of AI systems I want to build, it was one of the best ones.

Key takeaways

- The strongest AI market signal in early 2026 is not hype. It is repricing: more firms and roles now expect AI capability as part of product work.

- Frontend experience transfers well into product AI because it trains you to handle ambiguity, failure states, latency, and user trust.

- The premium is not prompt polish. It is turning uncertain model behavior into a usable and inspectable system.

- Real proof comes from shipped systems with constraints and tradeoffs, not from thin AI side projects.

References

- Indeed Hiring Lab — AI-related skills now appear in more than 2 in 5 postings among firms hiring for AI (January 22, 2026)

- LinkedIn — AI Engineer stayed the #1 U.S. role and AI literacy demand grew 70% YoY (January 16, 2026)

- World Economic Forum — Future of Jobs Report 2025

- Stanford HAI — 2025 AI Index Report
